Delivering Meaningful Employee Performance Feedback

“42.7 percent of all statistics are made up on the spot.” — Steven Wright.

“Nobody’s looking for a puppeteer in today’s wintry economic climate.” — John Cusack. Being John Malkovich.

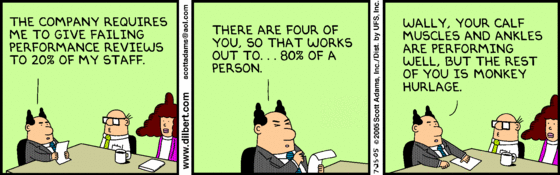

I received another request for a blog from a colleague recently: “Here is ONE that almost no one addresses honestly — The Employee Review System, the review process, the outcome, and its intended/non-intended outcome on the morale of an employee. This topic applies to human kind in any industry and one that pretty much EVERY company gets totally wrong…”

This topic has received a lot of attention recently as more and more large tech companies abandon a formal review process or at least abandon review scores that pigeonhole employees into pre-ordained buckets in order to meet budgetary constraints.

Such processes usually start with the employee giving him/herself a score and then writing some text to justify that score. In my experience, most high-performing employees ignore or minimize the effort, either not writing any text or writing a small bulleted list of their accomplishments, the rationale being that the manager already knows (or should know) what they’ve been working on based on regular interactions, so any time spent writing it up is wasted.

By contrast, low-performing employees spend entire days collecting data to highlight their efforts, writing long essays on why they should get a high score, sometimes even asking peers to write recommendations for them. Great, just what we need: low performers spending even less time doing real work.

Most managers tend to do as little as possible during the process, sometimes just entering a review score into the system, at most writing a few sentences when hounded by their HR partners. The entire process is viewed as bureaucratic and a burden by most involved. If we are talking about a large corporation that enforces a fixed distribution, the managers then spend many hours huddled with their peers in calibration meetings, trying to highlight the accomplishments of their team members and arguing for higher scores.

There are several major problems with this approach.

Many first-level managers don’t have any prior management experience and have little context with which to calibrate their scores. Most also don’t have enough employees on their teams to meet the required distribution. Believing that their team members all performed above and beyond the call of duty or simply trying to retain all their employees, they come into calibration meetings with a distribution that would only make sense in Lake Wobegon!

“Welcome to Lake Wobegon, where all the women are strong, all the men are good-looking, and all the children are above average.” — Garrison Keillor. A Prairie Home Companion.

Calibration meetings thus become a battleground where every imagined problem (be it a bug introduced by the employee or an unanswered email message or a perceived slight) is dragged out and used as an excuse to knock down review scores for people in other groups — since that’s the only way my employees have a chance to get a higher score in a zero-sum game. In the case of large organizations, due to time constraints, you end up concentrating primarily on the top and bottom performers and ignoring the bulk of the employees in the middle of the pack — the ones who really need your help.

I remember spending entire days in such calibration meetings while at Microsoft. Needless to say, these multi-hour meetings usually end up being a forum for n-1 attendees reading their email, surfing the web, stepping outside to deal with burning fires, and otherwise occupying themselves while one person delivers a lengthy sermon on the virtues of their team members. These episodes are only interrupted by the next presenter’s startled look as he reluctantly tears his eyes away from his laptop: “Ah, it’s my turn to talk about Jack now?”

Most of these shenanigans are performed simply because there’s a fixed budget for raises and bonuses with strict guidelines pertaining to each performance score: Someone getting a score of 3 on a scale of 1–5 can’t get more than a 2.5% raise, for example. And no more than 10% of employees can get a score of 4 or 5.
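To see how quickly a cap like that bites on a small team, here is a minimal sketch of the arithmetic in Python. It assumes the 10% cap is applied to a single group and rounded down; the rounding rule and the function name `max_top_scores` are hypothetical, chosen for illustration, and not any particular company's policy.

```python
# Assumption: the cap is applied per group and rounded down.
# The 10% figure comes from the example above; the rounding rule does not.
TOP_SCORE_CAP_PERCENT = 10


def max_top_scores(team_size: int, cap_percent: int = TOP_SCORE_CAP_PERCENT) -> int:
    """Largest number of 4s or 5s a group of this size may award under the cap."""
    # Integer arithmetic avoids floating-point surprises around exact multiples.
    return team_size * cap_percent // 100


if __name__ == "__main__":
    for size in (5, 9, 10, 25, 200):
        print(f"team of {size}: at most {max_top_scores(size)} top score(s)")
```

Under that assumption, any team of fewer than ten people gets no top scores at all unless it is pooled with other teams, which is what pushes the decision up into the calibration meetings.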

Using labels such as “Needs Improvement”, “Meets Expectations”, “Exceeds Expectations”, and “Excels” is really no different from using a score of 1–5 when it comes to evaluating employee performance, nor is it any more conducive to healthy feedback and mentoring.

The biggest problem with these labels or scores is that, once you have handed them out, it is extremely difficult to change them in the next cycle without causing morale problems: “What do you mean I only exceeded expectations? My last two scores were ‘Excels’ and I worked just as hard this time.”

There you go, destroying the morale of a top performer. Instead of concentrating on performance improvement, the discussion turns into a tug of war between the employee and the manager, the former trying to highlight accomplishments while the latter weasels out of responsibility and begs forgiveness: “I gave you an ‘Excels’ but it was lowered by upper management.”

In the worst cases, HR needs to get involved, the disgruntled employee writes a rebuttal, and the whole process drags on for months. Often, the employee ends up leaving the group or the company as she feels her contributions are not valued and she can get a better deal elsewhere.

Another problem with such forced distributions is that they are nonsensical as you move up the organizational ladder. It makes no sense to compare the contributions of a kernel engineer to those of a UI developer or a tester (I’ll give you one guess as to who will come out at the bottom on that one every day of the week) nor does it make sense to compare junior employees to senior ones. And it makes even less sense for the arbiters of such decisions to be at the top levels of the company — where those disciplines typically meet in a large organization. They don’t have enough visibility.

At my last job, at a startup, we used a very simple model (based on an article in the Harvard Business Review) that worked well: KiSS (Keep/Start/Stop doing). The idea is for the manager to write an email to the employee: “Here are a couple of things I’d like to see you keep doing, a couple of things you’re not doing that I think you should start doing, and a couple of things you should stop doing.” No forms, no labels or scores, no long essays, no calibration, no forced distribution.

I asked all managers on my team to run the emails by me so I could provide feedback based on what I’d seen independently, and to make sure weak and inexperienced managers didn’t pull any punches when dealing with low performers.

The emphasis here is obviously on providing actionable feedback, reinforcing good behavior, and highlighting negative traits that need to be changed in order for the employee to succeed. I found that the simplified process forced us all to be more transparent and avoided morale-crushing discussions about why you are a “3” instead of a “4” this time around.

Such an email should take no more than fifteen minutes to write and no more than half an hour to discuss in person. Such a lightweight process, I believe, also lends itself well to more frequent check-ins. Why wait for a heavyweight annual process if you can offer honest feedback once a quarter?

Let’s cut to the chase. The idea of performance reviews is great and necessary in healthy organizations. The implementation often sucks because it turns an opportunity for mentoring and honest feedback into a forced statistical distribution problem informed primarily by budgetary concerns.